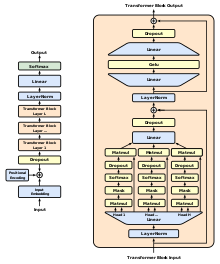

GPT-1 (Generative Pre-trained Transformer 1) is a machine learning model created by OpenAI for understanding and generating human language. It uses a 12-layer, decoder-only transformer with twelve masked self-attention heads, each operating on 64-dimensional states. It was trained with the Adam optimization algorithm[1], using a learning rate that was increased linearly from zero over the first 2,000 updates and then annealed to zero on a cosine schedule. The model has 117 million parameters, yet it needed only minimal architectural changes when fine-tuned for different tasks. It performed particularly well on natural language inference[2], question answering, commonsense reasoning, and semantic similarity tasks. It was pre-trained on the BookCorpus dataset, which was chosen because its long passages of contiguous text let the model learn to handle long-range information.
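The figures in the paragraph above can be checked with a little arithmetic. The sketch below counts the parameters of a 12-layer model with twelve 64-dimensional heads (768-dimensional states) and writes out one common form of a warmup-then-cosine learning-rate schedule. The vocabulary size (40,478), context length (512), feed-forward width (3,072), peak learning rate (2.5e-4), tying of the output layer to the token embeddings, and the exact schedule formula are assumptions drawn from the original paper and commonly published configurations, not from this article; `total_steps` is a hypothetical value.

```python
import math

# Only the layer count, head count, and 64-dim head size come from the article.
# Everything marked "assumed" is taken from the paper / public configs.
N_LAYERS = 12
N_HEADS = 12
HEAD_DIM = 64
D_MODEL = N_HEADS * HEAD_DIM  # 768-dimensional hidden states
D_FF = 3072                   # assumed feed-forward inner size
VOCAB = 40478                 # assumed BPE vocabulary size
N_CTX = 512                   # assumed context length (learned position embeddings)


def linear(n_in: int, n_out: int) -> int:
    """Weights plus biases of a dense layer."""
    return n_in * n_out + n_out


def count_parameters() -> int:
    embeddings = VOCAB * D_MODEL + N_CTX * D_MODEL  # token + position tables
    per_layer = (
        linear(D_MODEL, 3 * D_MODEL)  # fused query/key/value projection
        + linear(D_MODEL, D_MODEL)    # attention output projection
        + linear(D_MODEL, D_FF)       # feed-forward expansion
        + linear(D_FF, D_MODEL)       # feed-forward contraction
        + 2 * (2 * D_MODEL)           # two LayerNorms, each with gain and bias
    )
    # Assumed: the output softmax layer reuses (ties) the token embeddings,
    # so it contributes no extra parameters.
    return embeddings + N_LAYERS * per_layer


def learning_rate(step: int, total_steps: int,
                  max_lr: float = 2.5e-4, warmup: int = 2000) -> float:
    """Linear warmup from 0 to max_lr, then cosine annealing down to 0."""
    if step < warmup:
        return max_lr * step / warmup
    progress = min(1.0, (step - warmup) / max(1, total_steps - warmup))
    return max_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


if __name__ == "__main__":
    print(f"{count_parameters():,} parameters")  # 116,534,784 parameters
    for s in (0, 1000, 2000, 50000, 100000):
        print(s, learning_rate(s, total_steps=100000))  # hypothetical run length
```

Under these assumptions the count is about 116.5 million, consistent with the 117 million usually quoted: roughly 85 million parameters sit in the twelve transformer layers and about 31 million in the token embeddings.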


Generative Pre-trained Transformer 1 (GPT-1) was the first of OpenAI's large language models following Google's invention of the transformer architecture in 2017. In June 2018, OpenAI released a paper entitled "Improving Language Understanding by Generative Pre-Training", in which they introduced that initial model along with the general concept of a generative pre-trained transformer.

| Original author(s) | OpenAI |
|---|---|
| Initial release | June 2018 |
| Successor | GPT-2 |
| License | MIT |
| Website | openai |

Up to that point, the best-performing neural NLP models primarily employed supervised learning from large amounts of manually labeled data. This reliance on supervised learning limited their use on datasets that were not well-annotated and made it prohibitively expensive and time-consuming to train extremely large models. Many languages (such as Swahili or Haitian Creole) are also difficult to translate and interpret with such models because too little text is available to build a corpus. In contrast, GPT's "semi-supervised" approach involved two stages: an unsupervised generative "pre-training" stage, in which a language-modeling objective was used to set the initial parameters, and a supervised discriminative "fine-tuning" stage, in which these parameters were adapted to a target task.
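The two stages can be written as objectives to maximize. The block below follows the formulation in the original GPT-1 paper; the notation is introduced here for illustration: $\mathcal{U}$ is the unlabeled token corpus, $k$ the context window, $\Theta$ the model parameters, $\mathcal{C}$ the labeled dataset of input tokens $x^1, \ldots, x^m$ with label $y$, and $\lambda$ the weight of an auxiliary term.

```latex
\begin{aligned}
% Stage 1: unsupervised pre-training (next-token prediction)
L_1(\mathcal{U}) &= \sum_i \log P\left(u_i \mid u_{i-k}, \ldots, u_{i-1}; \Theta\right) \\
% Stage 2: supervised fine-tuning on labeled examples
L_2(\mathcal{C}) &= \sum_{(x, y)} \log P\left(y \mid x^1, \ldots, x^m\right) \\
% Fine-tuning objective with language modeling kept as an auxiliary term
L_3(\mathcal{C}) &= L_2(\mathcal{C}) + \lambda \, L_1(\mathcal{C})
\end{aligned}
```

Pre-training maximizes $L_1$ over unlabeled text; fine-tuning starts from the resulting $\Theta$ and, in the paper's setup, maximizes $L_3$, which keeps the language-modeling objective alongside the task loss.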

The use of a transformer architecture, as opposed to previous techniques involving attention-augmented RNNs, provided GPT models with a more structured memory than could be achieved through recurrent mechanisms; this resulted in "robust transfer performance across diverse tasks".
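Unlike an RNN, which carries context forward through a single recurrent state, a transformer layer lets each position attend directly to every earlier position. In a decoder-only model such as GPT-1 this is enforced by the causal mask in its "masked" self-attention heads. The following is a minimal single-head sketch in plain Python, assuming small hand-made query, key, and value matrices; it omits the learned projections, multiple heads, and the rest of a transformer block, and the function names are illustrative only.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    """Numerically stable softmax; entries equal to -inf get weight 0."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def causal_attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Single-head scaled dot-product attention with a causal mask.

    Position i may attend only to positions 0..i, so each output row is
    a weighted average of the value rows at or before that position.
    """
    d = len(q[0])
    outputs: Matrix = []
    for i, qi in enumerate(q):
        # Scaled dot products with every key; future positions masked to -inf.
        scores = [
            sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) if j <= i else -math.inf
            for j, kj in enumerate(k)
        ]
        weights = softmax(scores)
        # Weighted sum of value rows, column by column.
        outputs.append([sum(w * x for w, x in zip(weights, col)) for col in zip(*v)])
    return outputs


if __name__ == "__main__":
    q = k = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    v = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
    print(causal_attention(q, k, v)[0])  # [1.0, 0.0]: the first token sees only itself
```

Because the mask zeroes every weight on later positions, changing the value at the last position cannot alter the outputs for earlier positions, which is what makes left-to-right generation possible.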