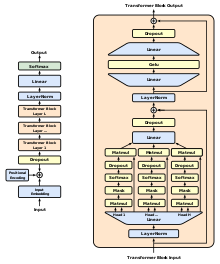

GPT-1 (Generative Pre-trained Transformer 1) is a machine learning model developed by OpenAI for natural language understanding and generation tasks. It uses a 12-layer, decoder-only transformer architecture with twelve masked self-attention heads, each with 64-dimensional states. The model is trained with the Adam optimization algorithm[1], using a linearly increasing learning rate. GPT-1 has 117 million parameters. Despite this size, it requires only minimal adjustment when applied to different tasks. It performs particularly well on natural language inference[2], question answering, commonsense reasoning, and semantic similarity tasks. A key resource for the model is the BookCorpus dataset, chosen because its long passages help the model learn to handle long-range information.
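The figures above can be checked against each other with some quick arithmetic. The following is a minimal sketch, not the authors' code: it counts the weights of a generic decoder-only transformer using the layer, head, and head-dimension values from the text. The vocabulary size, context length, feed-forward width, and the choice to share the output projection with the token embedding are assumptions that the text does not state.

```python
# Back-of-the-envelope parameter count for a GPT-1-sized decoder-only transformer.
# Values marked "assumed" are NOT stated in the article text.

N_LAYERS = 12        # from the text
N_HEADS = 12         # from the text
HEAD_DIM = 64        # from the text
VOCAB_SIZE = 40_478  # assumed: size of the subword vocabulary
CONTEXT_LEN = 512    # assumed: maximum sequence length (learned positions)
FFN_MULT = 4         # assumed: feed-forward width = 4 * model width

d_model = N_HEADS * HEAD_DIM   # width of the residual stream
d_ffn = FFN_MULT * d_model     # width of the feed-forward hidden layer


def count_parameters():
    """Return the total number of trainable weights under the assumptions above."""
    token_emb = VOCAB_SIZE * d_model   # also reused as the output projection (assumed tied)
    pos_emb = CONTEXT_LEN * d_model    # learned position embeddings
    # Query, key, value, and output projections, each with a bias vector.
    attn = 4 * (d_model * d_model + d_model)
    # Two linear layers with biases: d_model -> d_ffn -> d_model.
    ffn = (d_model * d_ffn + d_ffn) + (d_ffn * d_model + d_model)
    # Two layer norms per block, each with a scale and a shift vector.
    layer_norms = 2 * (2 * d_model)
    per_layer = attn + ffn + layer_norms
    return token_emb + pos_emb + N_LAYERS * per_layer


total = count_parameters()
print(f"model width: {d_model}")
print(f"parameters:  {total:,} (about {total / 1e6:.1f} million)")
```

Under these assumptions, twelve heads of 64 dimensions give a model width of 768, and the total lands close to the 117 million parameters quoted above. Most of the weights sit in the twelve transformer blocks, with the token embedding accounting for most of the remainder.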


Generative Pre-trained Transformer 1 (GPT-1) was the first of OpenAI's large language models, following Google's invention of the transformer architecture in 2017. In June 2018, OpenAI published a paper titled "Improving Language Understanding by Generative Pre-Training", in which they introduced that initial model along with the general concept of a generative pre-trained transformer.

| Original author(s) | OpenAI |
|---|---|
| Initial release | June 2018 |
| Repository | |
| Successor | GPT-2 |
| Type | |
| License | MIT |
| Website | openai |

Up to that point, the best-performing neural NLP models primarily employed supervised learning from large amounts of manually labeled data. This reliance on supervised learning limited their use of datasets that were not well annotated, and also made it prohibitively expensive and time-consuming to train extremely large models. Some languages (such as Swahili or Haitian Creole) are difficult to translate and interpret using such models because little text is available for building corpora. In contrast, GPT's "semi-supervised" approach involved two stages: an unsupervised generative "pre-training" stage, in which a language modeling objective was used to set initial parameters, and a supervised discriminative "fine-tuning" stage, in which these parameters were adapted to a target task.
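The two stages can be written as a pair of objectives. What follows is a sketch in the notation commonly used for this setup, and the symbols are introduced here rather than taken from the text above: $\mathcal{U} = \{u_1, \dots, u_n\}$ is the unlabeled token corpus, $k$ is the size of the context window, $\Theta$ denotes the model parameters, and $\mathcal{C}$ is a labeled dataset whose examples consist of input tokens $x^1, \dots, x^m$ and a label $y$.

```latex
% Stage 1: unsupervised generative pre-training (language modeling objective).
% Maximize the log-likelihood of each token given the k tokens before it.
L_1(\mathcal{U}) = \sum_{i} \log P\left(u_i \mid u_{i-k}, \dots, u_{i-1}; \Theta\right)

% Stage 2: supervised discriminative fine-tuning.
% Starting from the pre-trained parameters, maximize the log-likelihood
% of the correct label for each labeled example.
L_2(\mathcal{C}) = \sum_{(x, y)} \log P\left(y \mid x^1, \dots, x^m\right)
```

The first objective needs only raw text, which is why it can be applied to large unannotated corpora. The second starts from the parameters found in the first stage, so the labeled dataset can be comparatively small.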

The use of a transformer architecture, as opposed to previous techniques involving attention-augmented RNNs, provided GPT models with a more structured memory than could be achieved through recurrent mechanisms; this resulted in "robust transfer performance across diverse tasks".